Artificial After All is a collection of 256 autonomous artworks, deployed as individual smart contracts (Artificial) from a single parent contract (Artificial After All). Every piece contains a compressed neural network with its weights embedded directly in Ethereum bytecode and produces its image by computing from an immutable seed.

An Artificial contract includes built-in ownership and simple marketplace logic. It tracks ownership, allows the owner to set a price, and enables direct purchase, making the piece a self-contained computational and economic object.

Collectors in this system own the algorithm itself rather than a pointer. The artifact exists, operates, and transacts entirely through the Ethereum network.

Introduction

What if the artwork is not a file, but a system? What if it exists as code, defines its own behavior, and produces itself through execution?

Artificial After All is built around this idea. A neural network is compressed and embedded directly into smart contract bytecode, forming a self-contained object that can compute, be owned, and be traded without relying on any external system.

System Overview

The system consists of two layers:

ArtificialAfterAll.sol — The parent contract that maps a finite latent space into deployed instances.

Artificial.sol — Individual contracts, each representing a single piece.

The parent defines a fixed supply of 256. Each mint creates a new contract with a unique seed derived at creation time. Each contract contains:

- A compressed neural network (~15K parameters)

- An inference engine implemented in Solidity

- A deterministic rendering pipeline

- Built-in ownership and marketplace logic

There is no shared state between Artificial contracts. Each one exists independently.

Training

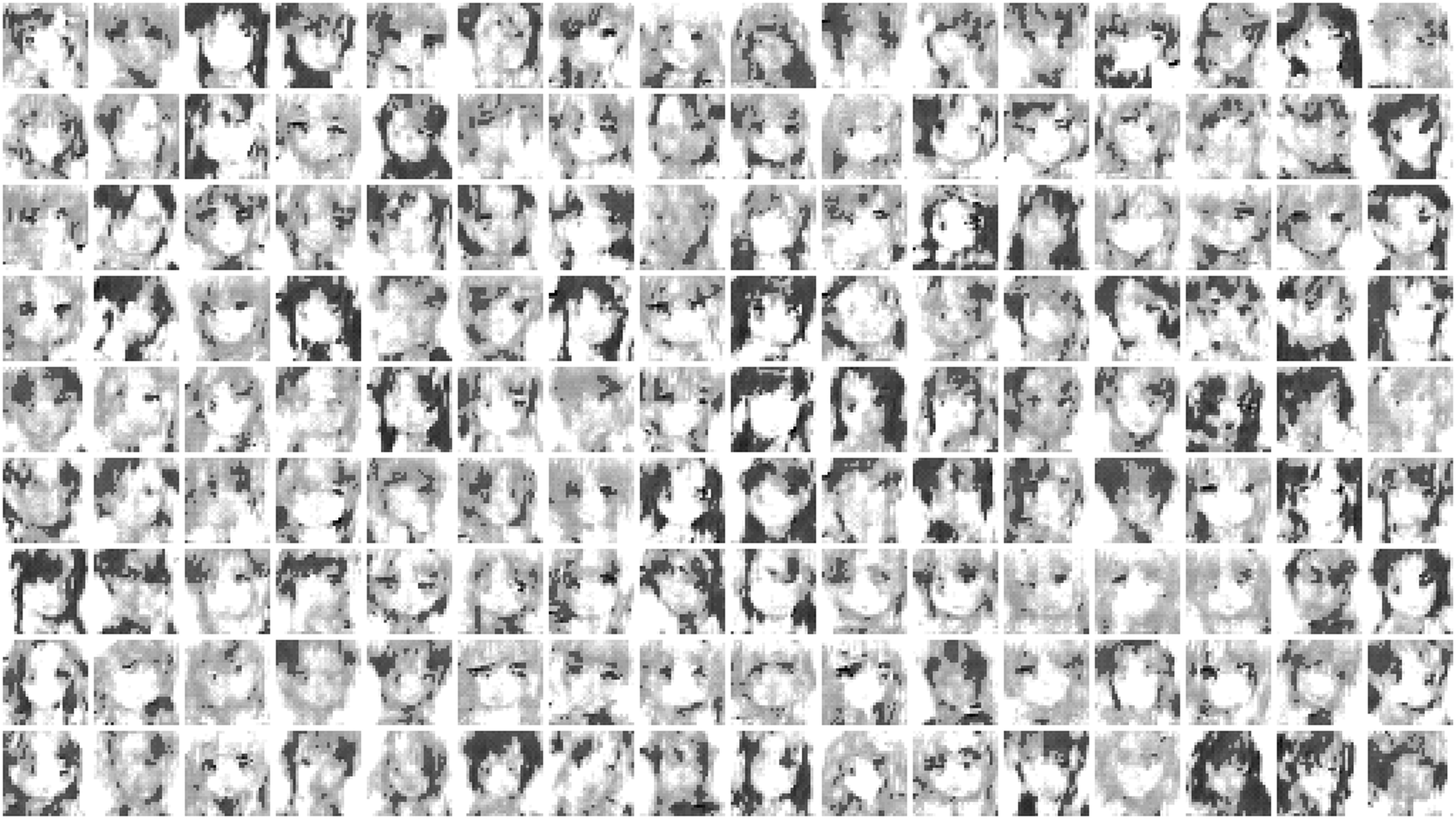

The model is a generative adversarial network trained on 43,000 anime faces. Training used quantization-aware fine-tuning, where the network learned to produce images under the same integer arithmetic constraints it would face onchain. The weights were not approximated after training. The int8 model that completed quantization-aware training is the model that was deployed.
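The idea of training under integer constraints can be illustrated with a small "fake quantization" round trip: weights are snapped to int8 values and mapped back, so the network sees the same rounding error during training that it will see at inference. This is a minimal sketch, not the training code used for the work. The symmetric per-tensor scale and the function names are assumptions made for illustration.

```python
def quantize_int8(weights, scale):
    """Map float weights to integers in [-128, 127] using a shared scale."""
    return [max(-128, min(127, round(w / scale))) for w in weights]


def dequantize(q_weights, scale):
    """Map int8 values back to floats; the rounding error stays baked in."""
    return [q * scale for q in q_weights]


def fake_quantize(weights):
    """Quantize then dequantize, as a quantization-aware forward pass would.

    Assumption: one symmetric scale per tensor, chosen from the largest
    absolute weight. The source does not state which scheme was used.
    """
    max_abs = max(abs(w) for w in weights) or 1.0
    scale = max_abs / 127
    q = quantize_int8(weights, scale)
    return q, dequantize(q, scale), scale


weights = [0.5, -1.0, 0.25, 0.0]
q, approx, scale = fake_quantize(weights)
# Every recovered weight is within one quantization step of the original.
assert all(abs(a - w) <= scale for a, w in zip(approx, weights))
```

Because the integers in `q` are what the network is trained against, they can be stored and executed as they are, with no separate approximation step after training.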

After training, the 15,657 parameters were compressed using Huffman coding and embedded directly into contract bytecode. The compression is lossless, and decompression happens onchain before each inference.

The choice of training data is part of the work. The model does not reproduce its training set. It learned a compressed representation of visual structure from those images and generates new outputs from that representation.

The Model as Artwork

The neural network is embedded directly in bytecode as compressed weight data. It is executed onchain using fixed-point arithmetic.

Calling the artwork function derives latent input from the seed, performs a forward pass, and applies normalization, shaping, and dithering to produce a 32×32 grayscale image. A 1-pixel border is applied, resulting in a 30×30 visible output.
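The stages described above can be sketched offchain as a chain of pure integer functions. This is only an illustration of the shape of the pipeline under loud assumptions: SHA-256 stands in for whatever derivation the contract uses, `placeholder_forward` is a hypothetical stand-in for the real network, the latent size of 16 is invented, and the shaping and dithering steps are omitted.

```python
import hashlib

SIZE = 32  # the text specifies a 32x32 image


def latent_from_seed(seed: int, dim: int = 16) -> list[int]:
    """Expand a seed into `dim` signed 8-bit values (SHA-256 is a stand-in)."""
    out, counter = [], 0
    while len(out) < dim:
        data = seed.to_bytes(32, "big") + counter.to_bytes(4, "big")
        out.extend(b - 128 for b in hashlib.sha256(data).digest())
        counter += 1
    return out[:dim]


def placeholder_forward(latent: list[int]) -> list[int]:
    """Hypothetical stand-in for the forward pass: any deterministic integer map."""
    return [(latent[i % len(latent)] * (i + 1)) % 997 for i in range(SIZE * SIZE)]


def normalize(raw: list[int]) -> list[int]:
    """Integer min-max normalization to grayscale values 0..255."""
    lo, hi = min(raw), max(raw)
    span = max(hi - lo, 1)
    return [(v - lo) * 255 // span for v in raw]


def apply_border(pixels: list[int], value: int = 0) -> list[int]:
    """Overwrite the outer 1-pixel ring, leaving a 30x30 visible interior."""
    out = list(pixels)
    for y in range(SIZE):
        for x in range(SIZE):
            if x in (0, SIZE - 1) or y in (0, SIZE - 1):
                out[y * SIZE + x] = value
    return out


def render(seed: int) -> list[int]:
    """Seed -> latent -> forward pass -> normalization -> border."""
    return apply_border(normalize(placeholder_forward(latent_from_seed(seed))))


image = render(7)
assert image == render(7)  # same seed, same pixels, on every call
```

Nothing in the chain reads a clock, a random source, or mutable state, which is why the image can be recomputed on each call instead of being stored.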

The output is recomputed on every call.

Determinism and Permanence

Each artwork is defined by:

- An immutable seed

- Immutable model weights

- Deterministic computation

Given the same seed, the output will always be identical.

Sovereign Art Objects

The collector owns the actual sovereign digital being: the bytecode, the weights, the inference engine, the rendering pipeline, the algorithm.

The Standard

The image emerges from learned representations through a continuous computational process. It is meant to be encountered as a whole. This is a deliberate choice.

Each piece is sovereign. Ownership, transfer, and sale are handled by the piece itself. It does not follow the ERC-721 model, nor include attributes or bidding systems. Buy and sell are embedded directly into each contract.
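The per-piece logic described here (an owner, an owner-set price, direct transfer, direct purchase) amounts to a very small state machine. The Python model below is a sketch of that behavior, not the contract's interface. The names `Piece`, `set_price`, `transfer`, and `buy` are hypothetical, and two rules are assumptions: a price of 0 means "not for sale", and a sale clears the price.

```python
class Piece:
    """Offchain model of one self-contained piece."""

    def __init__(self, owner: str):
        self.owner = owner
        self.price = 0  # assumption: 0 means the piece is not for sale

    def set_price(self, caller: str, price: int) -> None:
        if caller != self.owner:
            raise PermissionError("only the owner can set a price")
        self.price = price

    def transfer(self, caller: str, to: str) -> None:
        if caller != self.owner:
            raise PermissionError("only the owner can transfer")
        self.owner = to
        self.price = 0  # assumption: a listing does not survive a transfer

    def buy(self, caller: str, value: int):
        if self.price == 0:
            raise ValueError("not for sale")
        if value != self.price:
            raise ValueError("payment must equal the asking price")
        seller, paid = self.owner, self.price
        self.owner = caller
        self.price = 0
        return seller, paid  # who is paid, and how much


piece = Piece("alice")
piece.set_price("alice", 5)
seller, paid = piece.buy("bob", 5)
assert (seller, paid, piece.owner) == ("alice", 5, "bob")
```

There are no bids, offers, or attributes to model: the whole market is one price and one owner, held by the piece itself.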

The intent is not to withhold, but to preserve a direct relationship between the viewer and the output, between a person and a computed image.

Finite Latent Space

The collection is limited to 256 instances.

The parent contract acts as a registry that maps a finite latent space into deployed contracts. Each mint consumes one position in this space and instantiates a new object.

Distribution Model

The first 64 pieces of the collection are reserved for the artist. These pieces will be minted first and cannot be bypassed. The artist will decide whether to keep all of them or gift some to patrons.

After this initial phase:

- The remaining pieces are publicly mintable for 0.128 ETH.

- All participants mint under the same conditions.
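The distribution rules above can be written as a short mint check. This is a sketch of the rules as stated, not the parent contract's code. The `Registry` class and its method are hypothetical, and one detail is assumed: the artist's reserved mints ignore the payment value, since the text does not say whether they carry a price.

```python
MAX_SUPPLY = 256
ARTIST_RESERVE = 64
PUBLIC_PRICE_WEI = 128 * 10**15  # 0.128 ETH, with 1 ETH = 10**18 wei


class Registry:
    """Offchain model of the parent contract's mint rules."""

    def __init__(self, artist: str):
        self.artist = artist
        self.owners = []  # position in the list is the piece index

    def mint(self, caller: str, value_wei: int) -> int:
        index = len(self.owners)
        if index >= MAX_SUPPLY:
            raise ValueError("all 256 positions are taken")
        if index < ARTIST_RESERVE:
            # The reserve must be minted first; nobody can skip past it.
            if caller != self.artist:
                raise PermissionError("reserved phase: artist only")
        elif value_wei != PUBLIC_PRICE_WEI:
            raise ValueError("public mint costs exactly 0.128 ETH")
        self.owners.append(caller)
        return index


registry = Registry("artist")
for _ in range(ARTIST_RESERVE):
    registry.mint("artist", 0)
assert registry.mint("bob", PUBLIC_PRICE_WEI) == 64  # first public piece
```

Because the index is simply the number of pieces minted so far, the reserved phase cannot be bypassed: position 64 does not exist until positions 0 through 63 do.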

Technical Constraints

The network architecture was designed to fit within Ethereum's 24 KB contract size limit. The model was trained using quantization-aware fine-tuning. The network learned to produce images under integer arithmetic constraints, rather than having its weights approximated after training. The weight precision, layer dimensions, and activation functions were all chosen with the deployment target in mind.

Running a neural network fully onchain introduces constraints:

- No floating point operations → fixed-point math

- Strict gas limits → compressed weights and optimized loops

- Memory limitations → minimal buffer design

- Bytecode size limit (~24 KB) → compact architecture and compressed weights
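The first constraint, replacing floating point with fixed-point math, can be shown in a few lines. In this sketch a real number is stored as an integer scaled by a power of two, and products are shifted back down after multiplication. The choice of 8 fractional bits and the helper names are assumptions for illustration; the source does not state the format used onchain.

```python
FRAC_BITS = 8          # assumption: 8 fractional bits
ONE = 1 << FRAC_BITS   # the fixed-point representation of 1.0


def to_fixed(x: float) -> int:
    """Encode a real number as an integer scaled by 2**FRAC_BITS."""
    return round(x * ONE)


def fixed_mul(a: int, b: int) -> int:
    """Multiply two fixed-point values; the shift removes the extra scale."""
    return (a * b) >> FRAC_BITS


def fixed_dot(weights: list[int], inputs: list[int]) -> int:
    """Dot product that accumulates at full precision and shifts once."""
    acc = 0
    for w, x in zip(weights, inputs):
        acc += w * x
    return acc >> FRAC_BITS


def relu(x: int) -> int:
    """An activation that needs no floating point at all."""
    return x if x > 0 else 0


assert fixed_mul(to_fixed(1.5), to_fixed(2.0)) == to_fixed(3.0)
```

Accumulating first and shifting once, as `fixed_dot` does, loses less precision than shifting after every product, and it also means fewer operations per inner loop.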

After training locally, the weights were compressed using canonical Huffman coding, reducing 15,657 parameters into dense bytecode that is decompressed at inference time. The inference engine itself is written in Solidity assembly, with inner loops optimized through incremental pointer arithmetic and output-channel unrolling to stay within gas limits.
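Canonical Huffman coding is attractive for this kind of embedding because the decoder can rebuild every code from the code lengths alone, so no tree has to be stored. The sketch below shows the technique on a toy list of int8-style weights. It is an offchain illustration, not the contract's decoder, and the bit-string representation and function names are assumptions.

```python
import heapq
from collections import Counter


def code_lengths(data):
    """Huffman code length for each symbol, derived from its frequency."""
    freq = Counter(data)
    if len(freq) == 1:
        return {next(iter(freq)): 1}
    # Heap entries: (weight, unique tiebreak, symbols in this subtree).
    heap = [(w, i, [s]) for i, (s, w) in enumerate(sorted(freq.items()))]
    heapq.heapify(heap)
    lengths = dict.fromkeys(freq, 0)
    tiebreak = len(heap)
    while len(heap) > 1:
        w1, _, s1 = heapq.heappop(heap)
        w2, _, s2 = heapq.heappop(heap)
        for s in s1 + s2:
            lengths[s] += 1  # each merge pushes these symbols one level deeper
        heapq.heappush(heap, (w1 + w2, tiebreak, s1 + s2))
        tiebreak += 1
    return lengths


def canonical_codes(lengths):
    """Assign codes in (length, symbol) order, so lengths fully define them."""
    codes, code, prev_len = {}, 0, 0
    for sym, length in sorted(lengths.items(), key=lambda kv: (kv[1], kv[0])):
        code <<= length - prev_len
        codes[sym] = format(code, "0{}b".format(length))
        code += 1
        prev_len = length
    return codes


def encode(data, codes):
    return "".join(codes[s] for s in data)


def decode(bits, lengths):
    """Rebuild the code table from lengths only, then read prefix codes."""
    table = {c: s for s, c in canonical_codes(lengths).items()}
    out, cur = [], ""
    for b in bits:
        cur += b
        if cur in table:
            out.append(table[cur])
            cur = ""
    return out


weights = [0, 0, 0, 1, -1, 0, 2, 0, 1, 0, -3, 0]
lengths = code_lengths(weights)
bits = encode(weights, canonical_codes(lengths))
assert decode(bits, lengths) == weights  # lossless round trip
assert len(bits) < 8 * len(weights)      # shorter than one byte per weight
```

The gain comes from the skewed distribution of weight values: frequent values such as 0 receive short codes, and the rare ones pay for it with longer codes.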

The system is designed specifically around these constraints, not abstracted away from them. The offchain work exists to make the onchain execution possible. There will always be artifacts, whether the model runs in an optimal environment or within this constrained onchain setting. These deviations are not flaws to eliminate but inherent qualities of the system. The unexpected outputs, the distortions, the moments where the model misinterprets its own latent space: these are embraced as part of the work. I am drawn to these irregularities; they reveal the system thinking beyond intention, producing results that are not entirely predictable, yet still fully its own.

On Characters

The work draws from the visual language of anime faces as a familiar structure through which identity can emerge. The face becomes a minimal surface for expression.

After years of working through systems, abstractions, and non-figurative outputs, this marks a shift. For the first time, my work engages directly with identity and character. I am glad to arrive at this point, where these forms exist as computational formations discovered within the latent space.

The Name

The images are artificial, the intelligence is artificial, the art is artificial.

And yet something emerges. A model trained on tens of thousands of faces develops its own internal representation of what a face is. It computes something that did not exist before, from structure it was never explicitly given. The output is synthetic, but the process that produces it is not trivial. There is a learned compression of visual reality inside, and it runs every time it is called.

And the most beautiful part of all: this happens in a virtual world computer.

"The electric light did not come from the continuous improvement of candles." — Oren Harari